By: Sreekanth Sasidharan UTO & AVP, Infosys and LFN Board member

Achieving higher levels of network autonomy requires more than just technological advancements; it demands a deliberate and structured approach that stimulates both acceleration and a positive spiral in organizational transformation. As networks become increasingly complex and the industry moves toward zero-touch operations, the push for autonomous networks is intensifying. This environment not only motivates Telcos and enterprises to search for viable roadmaps toward autonomy but also underscores the need for foundational capabilities that support this journey.

The shift toward autonomy is not abrupt; rather, it is a continuum that builds upon existing processes and technologies. Artificial intelligence, for example, acts as a catalyst in this transformation, enabling organizations to progress along the autonomy continuum. To foster a positive spiral—where incremental improvements drive further advancements—organizations must recognize and invest in the prerequisites and standards that define each level of autonomy. By aligning with frameworks such as the NGMN autonomy model and the Cloud Native Maturity Model (CNMM), enterprises can systematically move toward higher levels of autonomy, creating a cycle of continuous improvement and innovation.

This article explores the different standards that define the levels of autonomy and their prerequisites, as well as the positive impact of the LFN projects Essedum and Salus in realizing these levels.

Correlating different standards for Autonomy

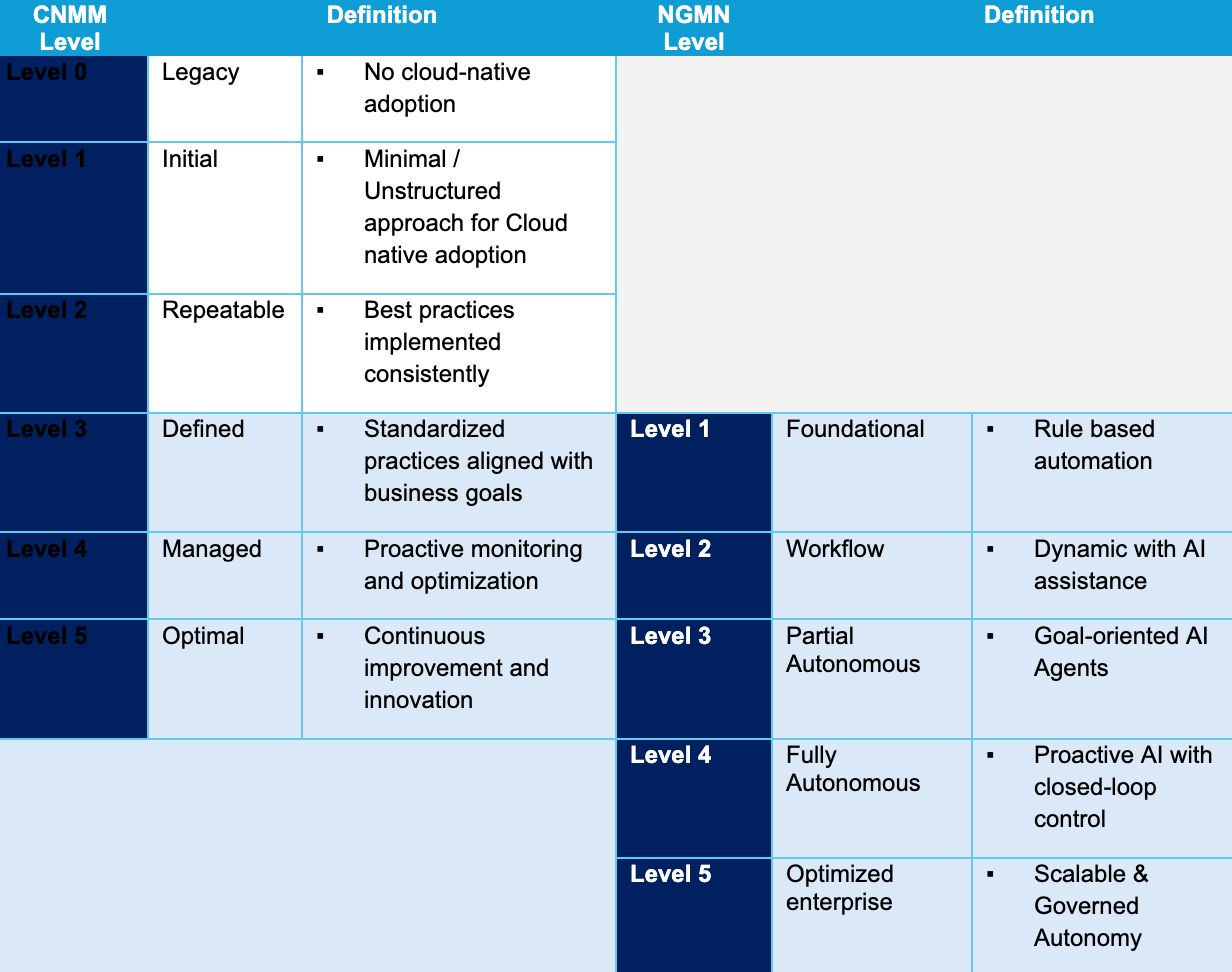

NGMN (Next Generation Mobile Networks Alliance) recently published a paper on agentic AI-based operating models, Cloud-Native Next Chapter – Agentic AI-Based Operating Models. The paper draws an interesting correlation between the NGMN autonomy model and the Cloud Native Computing Foundation (CNCF) Cloud Native Maturity Model (CNMM). One of my key learnings from the paper is the continuum and overlap between CNMM levels and NGMN autonomy levels, which can be summarized as follows.

The essence of this analysis is that the CNMM and NGMN levels form a sliding, overlapping scale, with CNMM being the precursor for NGMN. Level 2 of CNMM demands consistency and best practice in cloud nativeness: the organization has a well-defined cloud native strategy, a good degree of cloud nativeness at the resource layer, and a consistent roadmap. This in turn is essential for Levels 1 and 2 of NGMN, where the expectation is to have AI-enabled workflows that can operate on the resource layer. For any kind of dynamic resource management using AI, the underlying resource layer has to be flexible, which is what Level 2 of CNMM promises. As the autonomy expectation of NGMN moves forward, the resource layer is expected to self-adjust to the intent demands from upper layers. At autonomy Level 4, the resource layer has to self-heal, correcting changes that impact the intent so that the intent is guaranteed.

This can be better explained with an example. Imagine a 5G edge workload serving a business use case that always demands the same consistent bandwidth and QoS. The intent is programmed with this requirement. Because of the unpredictable nature of the network, physical conditions can change. Note that in a connected network there will always be physical infrastructure (like the transport network) that is inflexible beyond a certain extent. At Level 3 of NGMN, the AI-driven network brain will predict this condition, identify the potential impact on the intent, identify the root cause, and find a recovery path, such as an adjacent edge location that is unaffected by the traffic condition. This requires dynamic resource spin-up, workload onboarding, and bring-up. This kind of self-healing is only possible if the CNMM has reached beyond level 4. Secondly, for the resource layer and the upper network and systems layers to work in tandem and autonomously, orchestration of data, models, and agents is required. Thirdly, both CNMM and NGMN emphasize observability and optimization at the higher levels, which is important for sustaining those levels.
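The self-healing decision in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Intent` and `EdgeSite` types, the `plan_self_heal` function, the site names, and all numbers are hypothetical and do not come from NGMN, CNMM, or any LFN project. The predicted values are assumed to be supplied by some upstream AI model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Intent:
    """Hypothetical intent: the service levels the workload must always get."""
    min_bandwidth_mbps: float
    max_latency_ms: float


@dataclass(frozen=True)
class EdgeSite:
    """Hypothetical edge location with conditions predicted by an AI model."""
    name: str
    predicted_bandwidth_mbps: float
    predicted_latency_ms: float


def satisfies(site: EdgeSite, intent: Intent) -> bool:
    """True if the predicted conditions at the site still meet the intent."""
    return (site.predicted_bandwidth_mbps >= intent.min_bandwidth_mbps
            and site.predicted_latency_ms <= intent.max_latency_ms)


def plan_self_heal(current: EdgeSite,
                   adjacent: List[EdgeSite],
                   intent: Intent) -> Optional[EdgeSite]:
    """Pick the site the workload should run on, or None if no site fits.

    Stays on the current site while the intent is predicted to hold;
    otherwise chooses the adjacent site with the most predicted bandwidth
    among those that satisfy the intent.
    """
    if satisfies(current, intent):
        return current
    viable = [site for site in adjacent if satisfies(site, intent)]
    if not viable:
        return None
    return max(viable, key=lambda site: site.predicted_bandwidth_mbps)


# Illustrative values only.
intent = Intent(min_bandwidth_mbps=100.0, max_latency_ms=20.0)
current = EdgeSite("edge-a", predicted_bandwidth_mbps=60.0, predicted_latency_ms=35.0)
adjacent = [
    EdgeSite("edge-b", predicted_bandwidth_mbps=150.0, predicted_latency_ms=12.0),
    EdgeSite("edge-c", predicted_bandwidth_mbps=90.0, predicted_latency_ms=10.0),
]
target = plan_self_heal(current, adjacent, intent)
print(target.name if target else "no viable site")  # prints "edge-b"
```

The sketch covers only the decision. Actually spinning up resources and onboarding the workload at the chosen site is the part that depends on a flexible, cloud native resource layer.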

The expectation from TMF 1252, Autonomous Network Levels Evaluation Methodology, is remarkably similar: at Level 5, full autonomy is expected, with awareness, decision, execution, and intent management all being autonomous.

Summarizing

- Autonomy levels are a continuum and start from very foundational capabilities

- Resource-level flexibility depends heavily on cloud nativeness

- Orchestration between data, model, and agent is necessary

- MLOps and FinOps are required for optimizing and sustaining the levels

How Essedum and Salus impact the autonomy journey

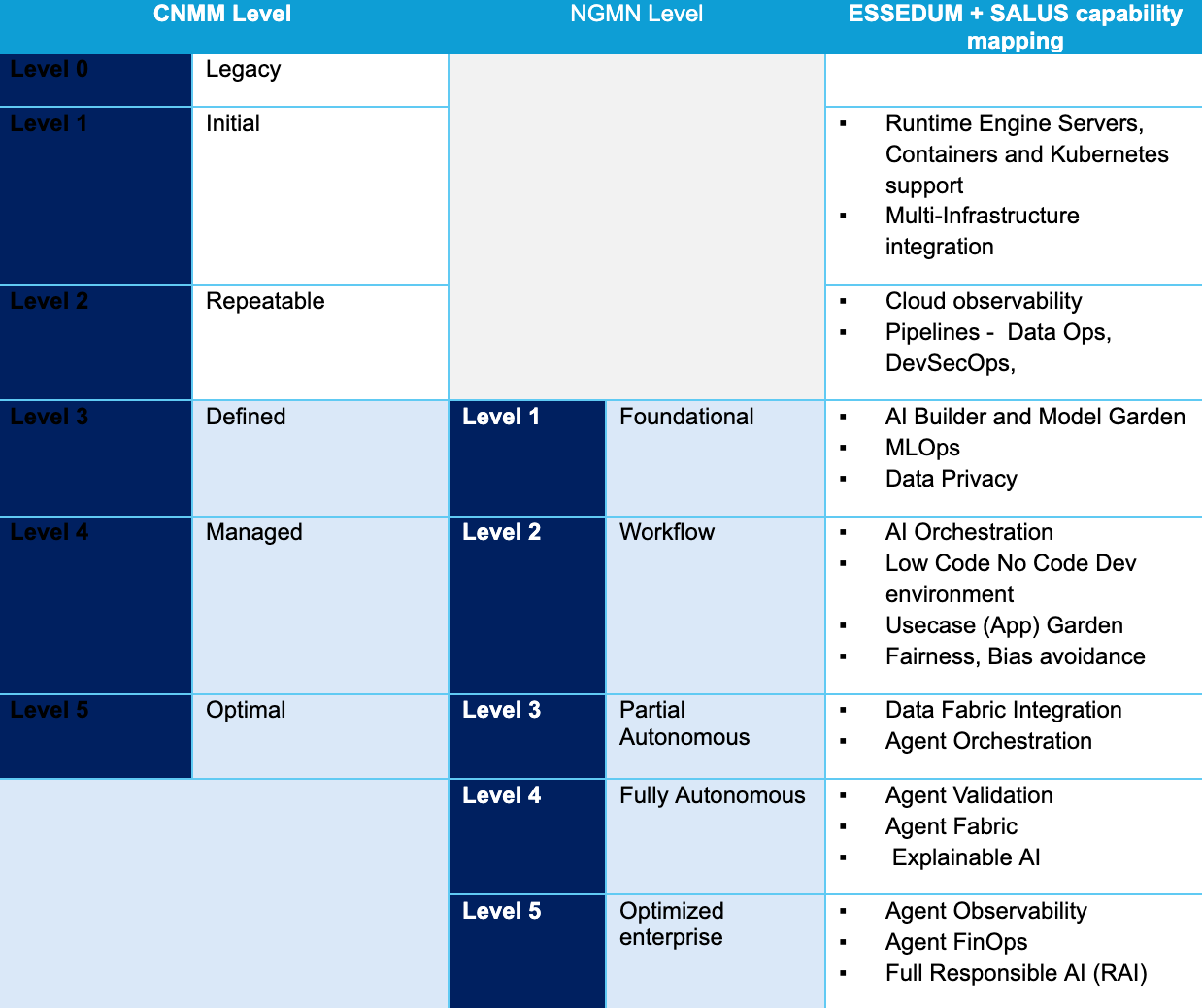

As described in the previous section, to achieve the higher levels of autonomy, the capabilities defined by CNMM and NGMN have to work in conjunction, especially from an AI perspective. The LFN projects Essedum and Salus help to enable and accelerate this. The figure in this section maps the Essedum and Salus capabilities to the CNMM and NGMN level expectations.

The key differentiating capabilities that Essedum and Salus bring, aligned to the CNMM and NGMN levels, are listed below:

- Cloud native design, integration with multi-infrastructures

- Data integration

- Multilevel orchestration – Data, AI, and Agents

- Low Code & No Code interfaces

- Use case orchestration

- Responsible AI

- Observability – MLOps, FinOps

- AAIF integration (MCP)

These capabilities start from Level 1 of CNMM and extend all the way up to Level 5 of the NGMN autonomy model.

To see how these capabilities can be used in a real case, I will reuse the example from the previous section. For self-healing of an edge workload, there first has to be an AI model that monitors parameters and predicts a change in network conditions and its impact. This requires integration of data with the model. For root cause analysis and action decisions, a series of agents needs to be called in a dynamic sequence, which requires agentic orchestration. Further, to translate the decision and communicate with the different network applications, the orchestration engine needs to use MCP. All of this has to be done with MLOps and FinOps in mind and under a Responsible AI (RAI) framework.
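The idea of calling agents in a dynamic sequence can be illustrated with a small Python sketch. It is a simplified illustration and not how Essedum or Salus implement orchestration: the agent names, the `run_agents` function, the context keys, and the `relocate_workload` tool name are all hypothetical. The final action is a plain dictionary standing in for the tool call that an orchestration engine would hand to an MCP client, so no real MCP library is used here.

```python
from typing import Any, Callable, Dict, List, Optional

Context = Dict[str, Any]
# Each agent updates the shared context and names the next agent (or None to stop).
Agent = Callable[[Context], Optional[str]]


def detect(ctx: Context) -> Optional[str]:
    """Flag an intent impact when predicted bandwidth drops below the intent."""
    ctx["impact_predicted"] = (
        ctx["predicted_bandwidth_mbps"] < ctx["intent_bandwidth_mbps"]
    )
    return "root_cause" if ctx["impact_predicted"] else None


def root_cause(ctx: Context) -> Optional[str]:
    """Placeholder root cause analysis; a real agent would inspect telemetry."""
    ctx["root_cause"] = "transport_congestion"
    return "decide"


def decide(ctx: Context) -> Optional[str]:
    """Produce an action as a dictionary that a tool-calling client could send."""
    ctx["action"] = {
        "tool": "relocate_workload",  # hypothetical tool name
        "arguments": {"target_site": ctx["fallback_site"]},
    }
    return None


AGENTS: Dict[str, Agent] = {
    "detect": detect,
    "root_cause": root_cause,
    "decide": decide,
}


def run_agents(start: str, ctx: Context, max_steps: int = 10) -> List[str]:
    """Run agents until one returns None; the order is decided at run time."""
    trace: List[str] = []
    current: Optional[str] = start
    while current is not None and len(trace) < max_steps:
        trace.append(current)
        current = AGENTS[current](ctx)
    return trace


# Illustrative values only.
ctx: Context = {
    "predicted_bandwidth_mbps": 60.0,
    "intent_bandwidth_mbps": 100.0,
    "fallback_site": "edge-b",
}
trace = run_agents("detect", ctx)
print(trace)          # ['detect', 'root_cause', 'decide']
print(ctx["action"])
```

Because each agent returns the name of the next one, the sequence is not fixed in advance: if no impact is predicted, the run stops after `detect`. The `max_steps` guard is a simple safeguard against loops, in the spirit of keeping agent behavior bounded and observable.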

Conclusion

In summary, reaching higher levels of network autonomy is a step-by-step transformation driven by AI, where incremental capability improvements create a “positive spiral” of further automation and innovation. The correlation between autonomy frameworks (NGMN, CNCF’s Cloud Native Maturity Model, and TMF 1252) emphasizes that strong cloud-native maturity and resource-layer flexibility are prerequisites for advanced AI-driven workflows, closed-loop control, and self-healing networks. The Essedum and Salus projects accelerate this journey by providing capabilities such as multi-infrastructure cloud-native foundations, data/model/agent orchestration, and supporting practices like MLOps, FinOps, observability, and Responsible AI. Together they offer an open source, open network way of implementing autonomy in networks.